Claude Opus 4.7: The New Flagship from Anthropic (April 2026)

Introducing Claude Opus 4.7

Our latest model, Claude Opus 4.7, is now generally available.

Opus 4.7 is a notable improvement on Opus 4.6 in advanced software engineering, with particular gains on the most difficult tasks. Users report being able to hand off their hardest coding work—the kind that previously needed close supervision—to Opus 4.7 with confidence. Opus 4.7 handles complex, long-running tasks with rigor and consistency, pays precise attention to instructions, and devises ways to verify its own outputs before reporting back.

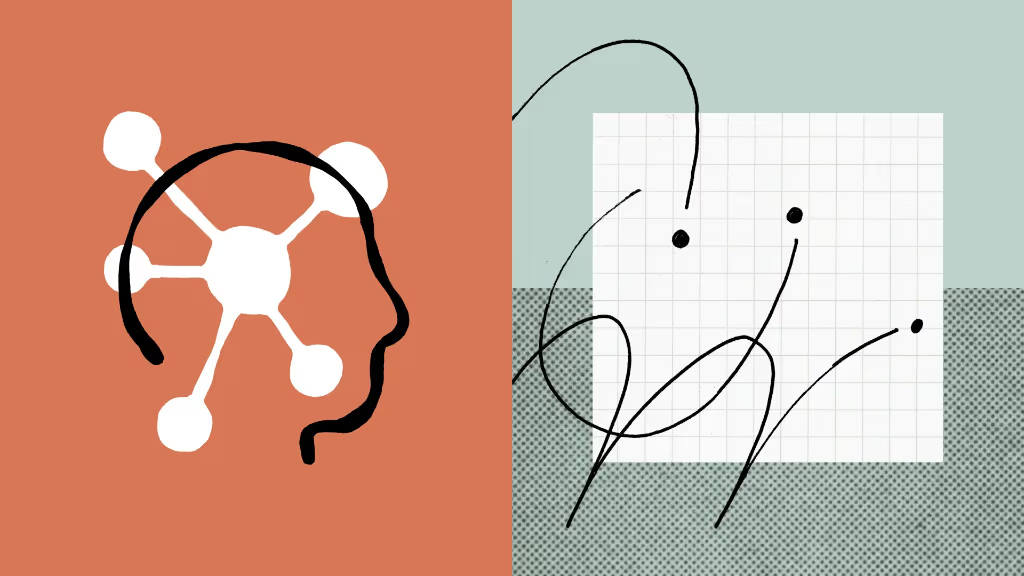

The model also has substantially better vision: it can see images at higher resolution. It’s more tasteful and creative when completing professional tasks, producing higher-quality interfaces, slides, and docs. And although it is less broadly capable than our most powerful model, Claude Mythos Preview, it shows better results than Opus 4.6 across a range of benchmarks.

Comparison across Opus 4.7, Opus 4.6, GPT-5.4, Gemini 3.1 Pro, and Mythos Preview.

Last week we announced Project Glasswing, highlighting the risks—and benefits—of AI models for cybersecurity. We stated that we would keep Claude Mythos Preview’s release limited and test new cyber safeguards on less capable models first.

Opus 4.7 is the first such model: its cyber capabilities are not as advanced as those of Mythos Preview. We are releasing Opus 4.7 with safeguards that automatically detect and block requests that indicate prohibited or high-risk cybersecurity uses.

Opus 4.7 is available today across all Claude products and our API, Amazon Bedrock, Google Cloud’s Vertex AI, and Microsoft Foundry. Pricing remains the same as Opus 4.6: $5 per million input tokens and $25 per million output tokens.
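As a rough illustration of the pricing above, the following sketch estimates the cost of a workload from its token counts. The function name and the example workload are hypothetical; only the two per-million-token prices come from the announcement.

```python
# Prices quoted in the announcement, in USD per million tokens.
INPUT_USD_PER_MTOK = 5.00
OUTPUT_USD_PER_MTOK = 25.00


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost in USD for a given number of input and output tokens."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 200k input tokens and 20k output tokens.
    # Input: 200,000 * 5 / 1,000,000 = 1.00; output: 20,000 * 25 / 1,000,000 = 0.50.
    print(f"${estimate_cost_usd(200_000, 20_000):.2f}")  # prints "$1.50"
```

Because output tokens cost five times as much as input tokens, output-heavy workloads dominate the bill even when the prompts are long.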

Testing Claude Opus 4.7

Claude Opus 4.7 has garnered strong feedback from our early-access testers. Below are some highlights and notes from our early testing of Opus 4.7:

Instruction following

Opus 4.7 is substantially better at following instructions. Interestingly, this means that prompts written for earlier models can sometimes now produce unexpected results: where previous models interpreted instructions loosely or skipped parts entirely, Opus 4.7 takes the instructions literally. Users should re-tune their prompts and harnesses accordingly.

Improved multimodal support

Opus 4.7 has better vision for high-resolution images: it can accept images up to 2,576 pixels on the long edge (~3.75 megapixels), more than three times the pixel count supported by prior Claude models. This opens up a wealth of multimodal uses that depend on fine visual detail: computer-use agents reading dense screenshots, data extraction from complex diagrams, and work that needs pixel-perfect references.
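For a harness that wants to downscale images itself before sending them, the long-edge limit above translates into simple arithmetic. This is a minimal sketch under that assumption; the function name is hypothetical, and the announcement does not say how oversized images are handled on the server side.

```python
MAX_LONG_EDGE_PX = 2576  # long-edge limit quoted in the announcement


def fit_to_long_edge(
    width: int, height: int, max_long_edge: int = MAX_LONG_EDGE_PX
) -> tuple[int, int]:
    """Return dimensions scaled down so the longer side fits max_long_edge.

    The aspect ratio is preserved and images already within the limit
    are returned unchanged (no upscaling).
    """
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    # Multiply before dividing to keep the arithmetic as exact as possible.
    new_width = max(1, round(width * max_long_edge / long_edge))
    new_height = max(1, round(height * max_long_edge / long_edge))
    return new_width, new_height


if __name__ == "__main__":
    print(fit_to_long_edge(3840, 2160))  # 4K screenshot -> (2576, 1449)
    print(fit_to_long_edge(800, 600))    # already small -> (800, 600)
```

A 16:9 screenshot scaled this way comes to roughly 3.7 megapixels, in line with the approximate figure quoted above.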

Real-world work

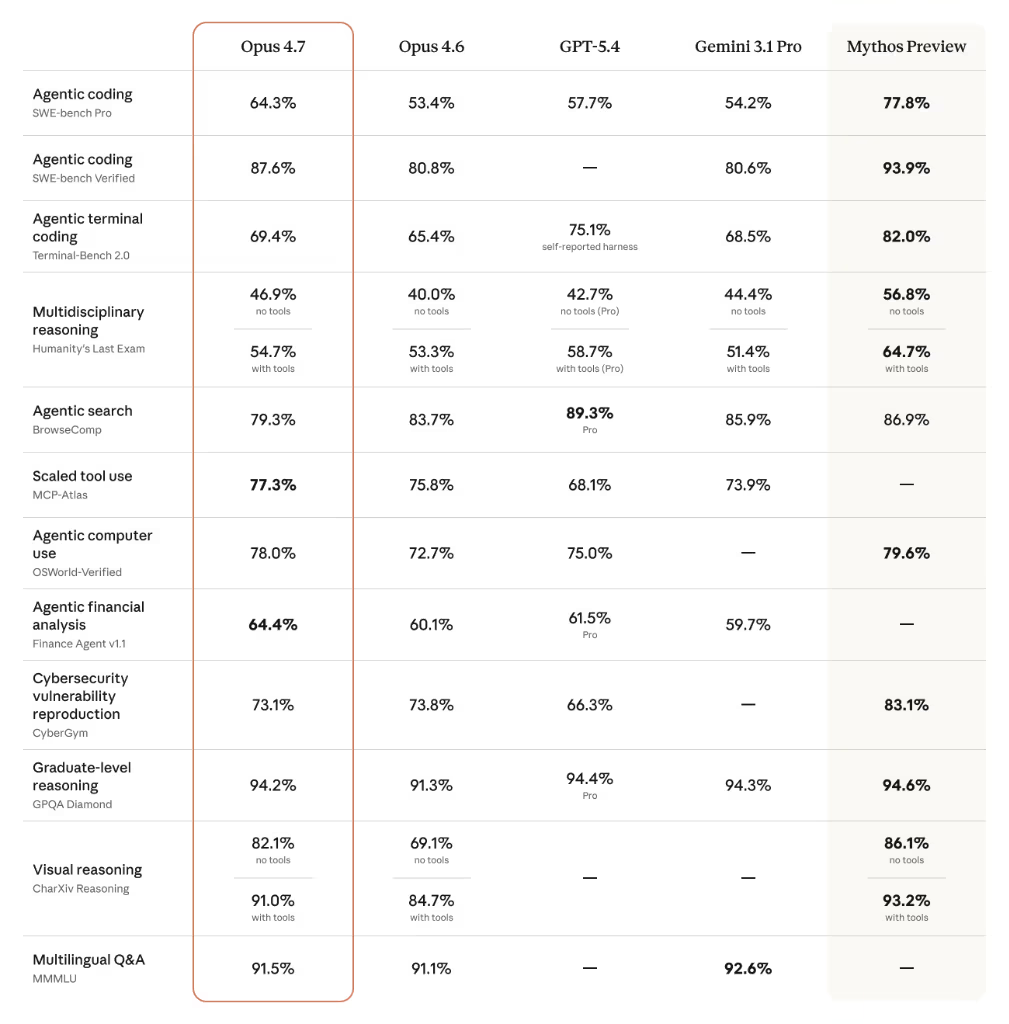

Our internal testing showed Opus 4.7 to be a more effective finance analyst than Opus 4.6, producing rigorous analyses and models, more professional presentations, and tighter integration across tasks. Opus 4.7 is also state-of-the-art on GDPval-AA, a third-party evaluation of economically valuable knowledge work across finance, legal, and other domains.

GDPval-AA Elo scores: Opus 4.7 leads the field in economically valuable knowledge work.

Memory

Opus 4.7 is better at using file system-based memory. It retains important notes across long, multi-session work and draws on them when starting new tasks, which therefore need less up-front context.

Safety and Alignment

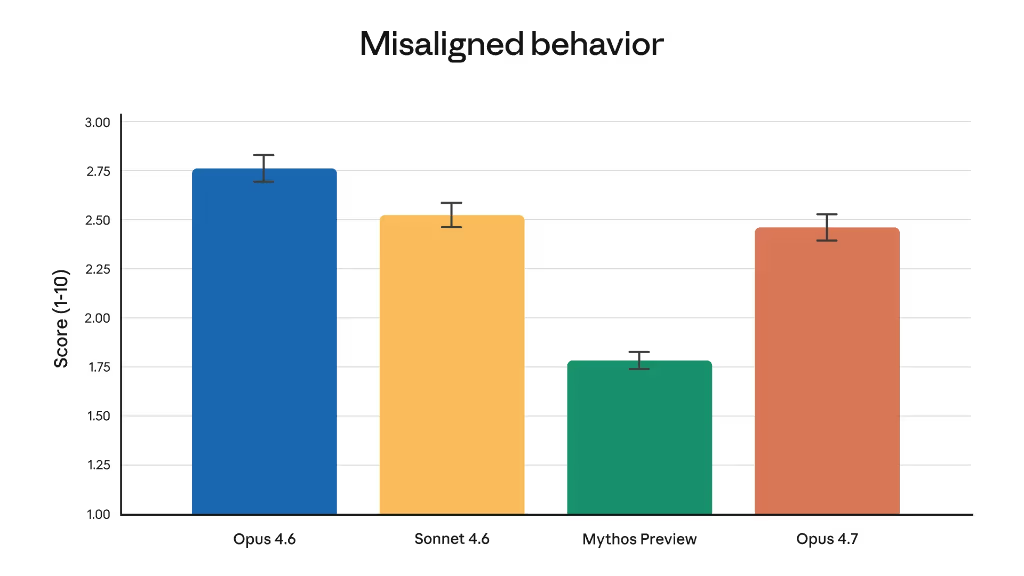

Overall, Opus 4.7 shows a similar safety profile to Opus 4.6: our evaluations show low rates of concerning behavior such as deception, sycophancy, and cooperation with misuse.

On some measures, such as honesty and resistance to malicious “prompt injection” attacks, Opus 4.7 is an improvement on Opus 4.6. Our alignment assessment concluded that the model is “largely well-aligned and trustworthy, though not fully ideal in its behavior”.

Automated behavioral audit: Opus 4.7 shows a modest improvement over Opus 4.6, with Mythos Preview remaining the benchmark leader.

Also Launching Today

More effort control

Opus 4.7 introduces a new xhigh ("extra high") effort level between high and max, giving finer control over the tradeoff between reasoning depth and latency.

Task budgets (API)

Task budgets are now in public beta. They let developers guide token spend so Claude can prioritize work across longer runs.

/ultrareview

A new Claude Code command that flags the bugs and design issues a careful human reviewer would catch.

In Claude Code, we’ve raised the default effort level to xhigh for all plans. We’ve also extended auto mode, in which Claude makes decisions on your behalf for longer tasks with fewer interruptions, to Max users.

Migrating from Opus 4.6 to Opus 4.7

Opus 4.7 is a direct upgrade to Opus 4.6, but two changes are worth planning for:

- Updated Tokenizer: The new tokenizer improves text processing, but the same input may map to more tokens: roughly 1.0–1.35× as many, depending on the content type.

- Deeper Thinking: At higher effort levels, the model produces more output tokens to achieve its improved reliability on hard problems.

The net effect is favorable—token usage across all effort levels is improved on internal coding evaluations—but we recommend measuring the difference on real traffic using our migration guide.
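To make the tokenizer change concrete, the sketch below projects a range of input token counts from the 1.0–1.35× multiplier and computes an observed ratio from paired measurements. The function names and sample numbers are hypothetical, and the paired counts are assumed to come from your own logs of the same requests run against both models.

```python
# Multiplier range quoted in the migration notes above.
TOKENIZER_MULTIPLIER_RANGE = (1.0, 1.35)


def projected_input_tokens(old_tokens: int) -> tuple[int, int]:
    """Project the (low, high) token count for input that used old_tokens before."""
    low, high = TOKENIZER_MULTIPLIER_RANGE
    return round(old_tokens * low), round(old_tokens * high)


def observed_ratio(pairs: list[tuple[int, int]]) -> float:
    """Compute new/old token usage from (old_count, new_count) pairs.

    Each pair holds the token counts measured for the same request on the
    old and new model. Totals are compared, so large requests weigh more.
    """
    old_total = sum(old for old, _ in pairs)
    new_total = sum(new for _, new in pairs)
    if old_total == 0:
        raise ValueError("need at least one request with a nonzero old count")
    return new_total / old_total


if __name__ == "__main__":
    # Hypothetical: a workload that used 1M input tokens per day on Opus 4.6.
    print(projected_input_tokens(1_000_000))  # (1000000, 1350000)
    # Hypothetical paired measurements from two replayed requests.
    print(round(observed_ratio([(100, 110), (200, 250)]), 2))  # 1.2
```

The projection only covers the tokenizer effect on input. Output token usage also depends on the effort level, which is why measuring on real traffic is the more reliable guide.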

AI Tools Review Editorial Team

Our editorial team consists of veteran AI researchers, software engineers, and industry analysts. We spend hundreds of hours benchmarking frontier models natively to provide you with objective, actionable intelligence on agentic AI capabilities and cybersecurity landscapes.