Engram Architecture: Solving the LLM Memory Problem (2026)

For years, the Achilles heel of Large Language Models has been their inherent transience. A conversation with an LLM was like talking to an incredibly intelligent amnesiac—the moment the context window ended, everything was forgotten. Then came "Engram," a new architectural paradigm pioneered by DeepSeek in early 2026 that fundamentally rewrites how AI handles memory, moving from fragmented retrieval to deep, persistent structural knowledge. This guide breaks down exactly what Engram is and why it renders traditional RAG systems obsolete.

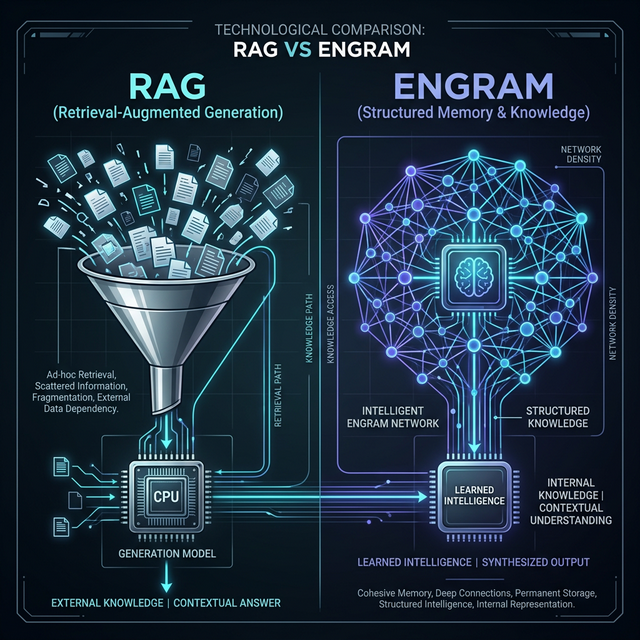

The Problem with RAG

To understand the magnitude of the Engram breakthrough, we first need to mourn the era of RAG (Retrieval-Augmented Generation). Throughout 2024 and 2025, if an enterprise wanted an AI to know its internal documents, it built a RAG pipeline.

RAG essentially breaks documents into chunks, converts them into numbers (embeddings), stores them in a vector database, and then searches that database whenever the user asks a question. The retrieved chunks are then pasted into the LLM's prompt.

RAG relies on external search and "stuffing" the context window, while Engram permanently updates the model's internal state.

This approach had critical flaws:

- Loss of Holistic Context: If you ask a RAG system, "Summarize the overarching theme across our last 3 years of financial strategy," it struggles. It can only retrieve specific chunks matching "financial strategy," missing the subtle connective tissue established across hundreds of separate documents.

- The Context Window Bottleneck: You can only paste so much retrieved information into the prompt before the model begins to hallucinate, slow down, or simply ignore instructions in the middle of the text (the "lost in the middle" phenomenon).

- High Latency and Cost: Running constant database searches, encoding text, and processing bloated prompts is computationally expensive and slow.

What is an Engram?

The word "engram" in neuroscience refers to the physical trace of a memory in the brain. DeepSeek's Engram Architecture borrows this concept. Instead of storing external documents in a separate database, Engram allows the LLM to structurally modify a localized, distinct portion of its own neural weights in real-time as it processes new information.

The DeepSeek Engram behaves like a localized, continuously trained neural network layered on top of the base LLM.

When an Engram-enabled agent reads an enterprise codebase or a user's daily slack messages, it isn't just saving text blocks. It is actively learning the concepts, the relationships between the developers, the architecture patterns, and the company vernacular. This knowledge is baked into a massive, highly efficient state tensor (the "Engram Core") that persists independently of the conversation context window.

Continuous Pre-training vs. Context Injection

Think of it this way: RAG is like giving a student an open-book test where they have to frantically search an encyclopedia for the answer. Engram is like the student having actually studied and memorized the material the night before. The knowledge is native, instant, and synthesized.

How Engram Architecture Works

Achieving infinite, structural memory without requiring a full (and impossibly expensive) model fine-tuning process required several breakthroughs in machine learning architecture.

1. The Differential Memory Layer

Under the hood, DeepSeek isolated a specific attention head mechanism designed solely for continual learning. This "Differential Memory Layer" intercepts new information during inference, calculates the delta between what the model already knows and the new data, and applies a highly compressed weight update to a localized memory block.

2. Temporal Tagging & Decay

A major issue with persistent memory is that facts change. (e.g., "The UI button is blue" becomes false when "The UI button is now red"). Engram solves this inherently through temporal decay and reinforcement. Concepts that are heavily reinforced grow strong connections. Contradictory new information triggers a deliberate update to the neural pathway, effectively "changing the model's mind" while retaining the historical context of when the change occurred.

3. Zero-Latency Retrieval

Because the Engram is essentially an extension of the model's own weights, accessing this memory doesn't require a database query. Retrieval happens natively during the standard forward pass of the neural network. This results in "zero-latency" recall, making the model incredibly fast even when referencing terabytes of internal enterprise data.

The Persistent Agentic Ecosystem

The primary driver for the Engram architecture wasn't just better enterprise search; it was the prerequisite for true Agentic Workflows.

Engram enables AI companions that maintain a continuous, evolving understanding of your specific workspace over years, not just within a single chat session.

Imagine a junior developer, "Alice," working at a company for two years. She knows that "Project Phoenix" relies on a legacy database, she knows Sarah prefers Slack over Email, and she remembers the exact edge-case bug that crashed the server last Christmas. This holistic, interwoven understanding of an environment cannot be simulated by chunking documents into a vector database.

By plugging an Engram core into an agent like Claude CoWork or local frameworks like Moltbot, the AI becomes a true continuous colleague. It observes the environment, structures the knowledge natively, and applies that deep context to every task it undertakes months or years later.

Security: The Local Engram Paradigm

One of the most profound implications of the Engram structure is data sovereignty. In a RAG setup, your data still has to be sent out to the frontier model in the prompt payload, raising massive security concerns for enterprise IP.

With the Engram architecture, the physical file storing the memory (the Engram state tensor) can be decoupled from the base LLM. An enterprise can host the base open-weights model on a server cluster, and assign individual, encrypted Engram files to specific users or teams.

- Ultimate Privacy: The memory file never leaves the user's local network or dedicated hardware.

- Portable Intelligence: An employee can theoretically transfer their personalized AI companion's "Engram" from a work Macbook to an enterprise server seamlessly, retaining all specific context.

Conclusion: The End of AI Amnesia

The introduction of DeepSeek's Engram architecture marks the definitive end of the "stateless" AI era. By moving from externalized database retrieval to internalized structural memory, AI has crossed a critical threshold toward mimicking human cognition.

As this technology permeates the industry throughout 2026, the competitive advantage will no longer belong to the companies with the best prompts or the largest RAG databases, but to those who have "trained" their persistent AI digital twins longest and deepest within their own operational environments. The age of the truly intelligent, permanent digital colleague has arrived.

Related Articles

AI Tools Review Editorial Team Expert Verified

Our editorial team consists of veteran AI researchers, software engineers, and industry analysts. We spend hundreds of hours benchmarking frontier models natively to provide you with objective, actionable intelligence on agentic AI capabilities and cybersecurity landscapes.