Kimi K2.5: The 2026 AI Speed & Velocity Benchmark Race

For the first half of the decade, the Artificial Intelligence race was defined by a single metric: raw, generalized reasoning capability. The pursuit of "AGI" (Artificial General Intelligence) saw models grow exponentially in parameter count, leading to massive, slow, and expensive behemoths. But in early 2026, the launch of Moonshot AI's Kimi K2.5 marked a definitive pivot. The new battleground is no longer just how smart a model is, but how fast it can apply that intelligence.

The Pivot from Intelligence to Velocity

By late 2025, frontier models like Claude 4.5 Opus and GPT-4o had achieved a level of intelligence that satisfied 95% of typical enterprise use cases. They could write flawless code, draft complex legal contracts, and solve PhD-level math. However, as the focus shifted from humans chatting in text boxes to fully autonomous agentic workflows, a glaring bottleneck emerged: latency.

When an AI agent is tasked with browsing the live internet, coordinating with three different APIs, and iterating on a codebase, it might require hundreds of sequential LLM calls. If each call takes 8 seconds to generate a response, a complex task could take 45 minutes to execute. Kimi K2.5 realized that to make agentic workflows viable in real-time environments, inference speed had to increase by an order of magnitude without sacrificing logic.

The Kimi K2.5 architecture treats token generation as a continuous, unbounded data stream.

Under the Hood: The Kimi Architecture

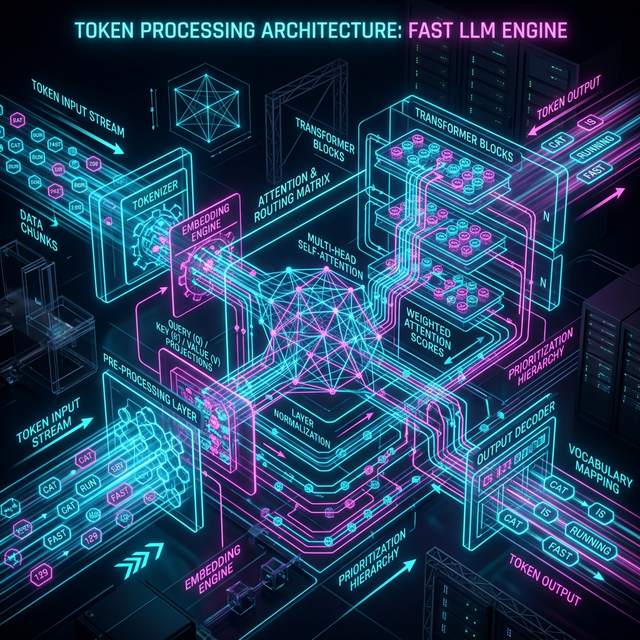

Moonshot AI did not simply buy more GPUs to make K2.5 faster. They fundamentally re-engineered the token output mechanism, moving away from standard autoregressive generation (where the model predicts one word, then feeds it back into itself to predict the next word).

Kimi introduced a proprietary "Speculative Block Decoding" mechanism. Instead of predicting a single next token, K2.5's smaller, highly-efficient draft models predict massive chunks of tokens simultaneously (often up to 32 tokens at once). The primary, heavy-reasoning model then mathematically verifies these chunks in parallel rather than sequentially.

K2.5's Speculative Block Decoding allows for simultaneous multi-token generation and validation.

- Parallel Verification: The core logic engine spends its compute verifying drafts rather than generating them from scratch.

- Dynamic Context Pruning: As Kimi reads a vast prompt (up to 2 million tokens), it algorithmically "forgets" irrelevant context in milliseconds, keeping the working memory feather-light to maintain speed.

- Custom Silicon Optimization: Moonshot AI famously co-designed the K2.5 architecture alongside specific silicon optimizations, bypassing standard CUDA bottlenecks.

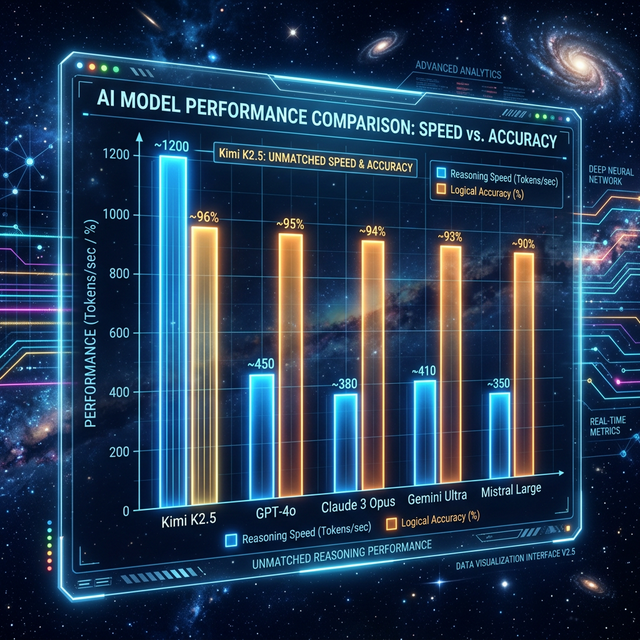

The Speed Benchmarks: Shattering the Ceiling

When independent reviewers ran Kimi K2.5 through standard enterprise benchmarks, the results were jarring. For standard conversational text, K2.5 consistently outputs at speeds exceeding 1,200 tokens per second. To put this in perspective, reading text at that speed looks like a solid wall of letters instantly appearing on the screen.

Independent tracking showing Kimi K2.5 breaking the 1,000 tokens/sec barrier while maintaining 90%+ reasoning accuracy.

More importantly, this speed is maintained during complex reasoning tasks. When asked to review a 10,000-line codebase and write a comprehensive refactoring plan, K2.5 completed the entire thought process and generated a 4,000-word response in under 4 seconds. Competing models of similar logical capability require upwards of 40 seconds for the same task.

The "Time-to-Thought" Metric

This leap introduced a new industry standard: TTT (Time-to-Thought). Instead of measuring total output time, developers now measure the latency before the model begins executing the first logically sound action in a multi-step workflow. Kimi's TTT is virtually zero.

Enabling Real-Time Voice and Video

Human conversation happens at roughly 150 words per minute. If an AI voice assistant has a 2-second delay before answering, the human brain perceives it as unnatural, clunky, and robotic.

Kimi K2.5's massive speed has effectively eliminated latency in voice-to-voice models. When integrated into customer service bots or live translation ear-pieces, the AI responds faster than a human typically would. It can confidently interrupt, change topics mid-sentence, and parse ambient background noise without breaking the conversational flow.

Furthermore, this token velocity is unlocking real-time video generation. Because K2.5 can reason through spatial geometry and frame-by-frame physics in milliseconds, it is increasingly being used as the "director" for models like OpenAI's Sora 2, dictating complex camera movements and character continuity on the fly during live gaming or interactive cinema.

The Impact on Agentic Frameworks

The most profound impact of Kimi's speed is on multi-agent frameworks. In platforms where dozens of specialized AI agents talk to each other to solve a macro-problem (e.g., one agent researches, one writes code, one tests, one reviews), high latency causes "agentic gridlock."

With Kimi K2.5 as the underlying engine, these internal "conversations" happen thousands of times a minute. An agentic software team can debate a feature implementation, write the code, run unit tests, identify a bug, rewrite the code, and push to production—a process that might take human developers a week—in the time it takes you to sip your coffee.

Cost Efficiency: The Hidden Benefit

Speed is directly correlated to compute cost. Because Kimi K2.5 ties up GPU memory for a fraction of the time, Moonshot AI can process significantly more user requests per server rack.

This efficiency was aggressively passed on to the consumer. In January 2026, Kimi undercut the API pricing of the leading frontier models by nearly 80%, inciting a massive price war in the LLM space. For enterprise clients building high-volume applications, switching to Kimi resulted in dramatic drops in operational expenditure overnight.

Conclusion: Speed as a Feature

Kimi K2.5 has proven that we do not necessarily need models that are 10x smarter to achieve revolutionary breakthroughs in software; we need models that are 10x faster. By shattering the speed barrier, Moonshot AI has transitioned the industry from "thinking" machines to "acting" machines.

As competitors rush to optimize their architectures to match Kimi's velocity, 2026 is shaping up to be the year where AI ceases to be a tool you wait for, and becomes a continuous, instant extension of human thought processes.

Related Articles

AI Tools Review Editorial Team Expert Verified

Our editorial team consists of veteran AI researchers, software engineers, and industry analysts. We spend hundreds of hours benchmarking frontier models natively to provide you with objective, actionable intelligence on agentic AI capabilities and cybersecurity landscapes.